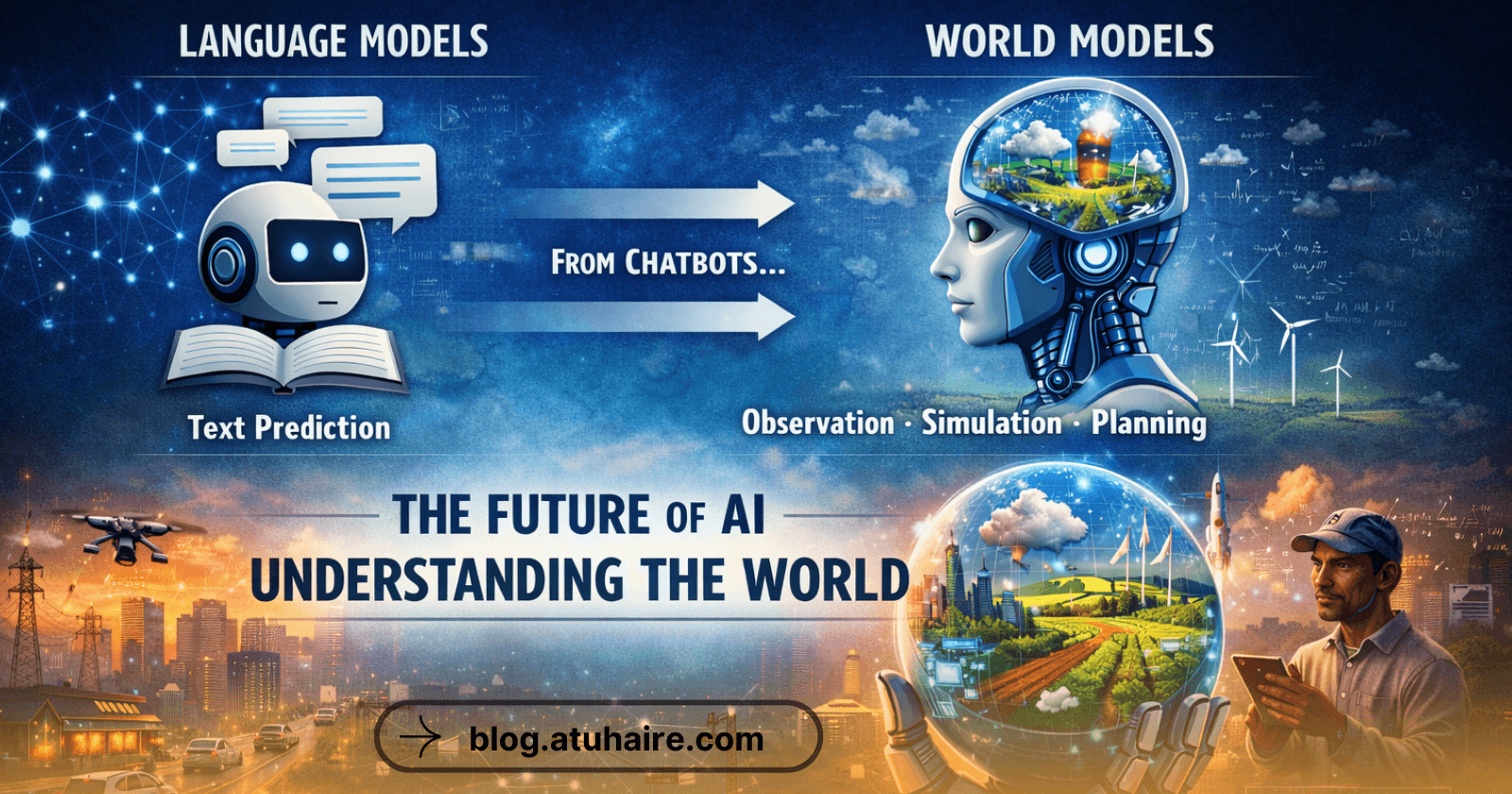

From Language Models to World Models: Why the Future of AI Is About Understanding Reality

My name is Ronnie, and I'm a fellow geek like you 😎. I am passionately curious about tech.

I blog, tweet, write, code, vlog, discuss and eat anything about tech.


Imagine This…

Imagine an AI system that doesn’t just answer questions, but understands the world well enough to predict what will happen next.

Not just which word comes after another,

but what happens after it rains,

what happens when traffic builds up,

what happens when resources are scarce,

or what happens when a decision is made.

This is the idea behind World Models, a concept gaining serious attention as researchers begin to acknowledge a hard truth:

Predicting language alone is not enough to build truly intelligent systems.

The Limits of Large Language Models (LLMs)

Large Language Models (LLMs) like GPT, Claude, or Gemini have transformed how we interact with computers. They are excellent at:

Generating text

Summarizing information

Writing code

Conversational reasoning

However, at their core, LLMs are trained to predict the next token in a sequence.

They learn patterns in text, not necessarily cause and effect in the real world.
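To make "predicting the next token" concrete, here is a deliberately tiny sketch: a bigram counter that picks the most frequent follower of a word. Real LLMs use neural networks over subword tokens, so treat this only as an illustration of the objective; the names `train_bigram` and `predict_next` and the toy corpus are made up for this post.

```python
from collections import Counter, defaultdict

def train_bigram(text):
    """Count, for each word, which words follow it in the training text."""
    counts = defaultdict(Counter)
    words = text.lower().split()
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts

def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]

# Toy corpus (an assumption for illustration only).
corpus = "when it rains the road gets wet . when it rains the traffic slows ."
model = train_bigram(corpus)

print(predict_next(model, "it"))     # rains
print(predict_next(model, "snows"))  # None: the word never appeared in the text
```

The model "knows" that "rains" follows "it" only because it saw that pattern in text. It has no notion of why roads get wet, which is exactly the gap this article is about.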

This leads to clear limitations:

They can sound confident while being wrong

They struggle with long-term planning

They lack a grounded understanding of physics, space, time, and consequences

In short, LLMs talk well, but they don’t truly understand the world they describe.

Yann LeCun’s Vision: World Models

Yann LeCun, Meta’s Chief AI Scientist and a pioneer of modern deep learning, has been vocal about this gap.

He defines World Models as:

“AI systems designed to learn internal representations of how the world works.”

Just like humans do.

Humans don’t learn primarily by reading text.

We learn by observing, acting, predicting outcomes, and updating our understanding when we’re wrong.

LeCun summarizes the problem clearly:

“Predicting tokens is not enough. We need models that build internal simulations of the world, just like humans do.”

Observation, Simulation, and Planning

World Models aim to give AI three core abilities:

1. Observation

The ability to perceive the environment through vision, sensors, data streams, or interactions.

For example:

Watching traffic patterns

Observing weather changes

Monitoring human behavior or system states

2. Simulation

The ability to internally simulate what might happen next.

This is critical. Instead of reacting, the system can ask:

If I do X, what is the likely outcome?

What are the second- and third-order effects?

Humans do this constantly, often subconsciously.

3. Planning

Based on simulations, the system can choose actions that lead to better outcomes.

This is the foundation of:

Robotics

Autonomous systems

Decision-making AI

True AI agents
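The observe, simulate, plan loop described above can be sketched in a few lines. This is a minimal toy, not how any production system works: the "world" is a position on a number line, and `world_model` is a hand-written transition function standing in for something that would normally be learned from observations. All names here (`world_model`, `cost`, `plan`, `run`) are hypothetical.

```python
from itertools import product

ACTIONS = (-1, 0, 1)  # step left, stay, step right

def world_model(state, action):
    """Hypothetical transition model: predicts the next position.
    In a real system this would be learned, not hard-coded."""
    return state + action

def cost(state, goal):
    """How far a (simulated) state is from where we want to be."""
    return abs(goal - state)

def plan(state, goal, horizon=3):
    """Simulate every action sequence of length `horizon` and
    return the first action of the cheapest one."""
    best_seq, best_cost = None, float("inf")
    for seq in product(ACTIONS, repeat=horizon):
        simulated, total = state, 0
        for action in seq:
            simulated = world_model(simulated, action)  # "If I do X..."
            total += cost(simulated, goal)              # "...how good is the outcome?"
        if total < best_cost:
            best_seq, best_cost = seq, total
    return best_seq[0]

def run(start, goal, max_steps=20):
    """Observe the state, plan by simulation, act, and repeat."""
    state = start
    trajectory = [state]
    for _ in range(max_steps):
        if state == goal:
            break
        action = plan(state, goal)
        # Assumption: the real environment behaves exactly like the model.
        state = world_model(state, action)
        trajectory.append(state)
    return trajectory

print(run(0, 4))   # [0, 1, 2, 3, 4]
```

Replanning at every step, instead of committing to one long plan, is a common design choice because it lets the agent correct course when the real world disagrees with its simulation.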

Self-Supervised Learning and JEPA Models

A key technical idea behind World Models is self-supervised learning.

Instead of relying on massive labeled datasets, the system learns by:

Predicting missing parts of observations

Comparing expected outcomes to actual outcomes

Learning representations without explicit human instructions
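Here is a small sketch of that idea, under heavy simplifying assumptions. The "observations" are three consecutive readings of a steadily changing signal, the middle reading is hidden, and a one-weight model learns to fill it in from its neighbours. No human labels are involved: the hidden part of the data is the training signal. The function names and the data are invented for illustration.

```python
def make_windows():
    """Unlabeled observations: three consecutive readings of a steady signal."""
    return [(a, a + d, a + 2 * d) for a in range(3) for d in (1, 2)]

def train(windows, lr=0.01, epochs=20):
    """Learn a single weight w so that w * (left + right) predicts the hidden middle."""
    w = 0.0
    for _ in range(epochs):
        for left, middle, right in windows:
            context = left + right       # the part the model is allowed to see
            predicted = w * context      # expected outcome
            error = middle - predicted   # actual outcome minus expected outcome
            w += lr * error * context    # gradient step on the squared error
    return w

w = train(make_windows())
print(round(w, 4))              # 0.5: the model discovered "take the average"
print(round(w * (10 + 20), 2))  # 15.0: fills in the hidden middle of (10, ?, 20)
```

Nobody told the model that the middle value is the average of its neighbours. It recovered that rule purely by predicting a missing part and correcting itself when the prediction was off.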

This philosophy underpins JEPA (Joint-Embedding Predictive Architecture), a framework proposed by LeCun.

JEPA models:

Learn abstract representations of the world

Avoid predicting raw pixels or tokens directly

Focus on learning meaningful latent structure

LeCun argues that this approach is closer to how humans and animals learn: through interaction and prediction rather than explicit supervision.
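To show what "predicting in representation space" looks like structurally, here is a rough sketch of a single JEPA-style forward pass in plain Python. It is not the published implementation: the encoders are random linear maps instead of trained networks, there is no gradient step, and every name and size (`context_encoder`, `predictor`, `INPUT_DIM`, and so on) is an assumption for illustration. The one detail taken from the I-JEPA paper is that the target encoder is a slow-moving average of the context encoder.

```python
import random

def linear(weights, x):
    """Apply a weight matrix (a list of rows) to a vector."""
    return [sum(w * v for w, v in zip(row, x)) for row in weights]

def random_matrix(rows, cols, rng):
    return [[rng.uniform(-1, 1) for _ in range(cols)] for _ in range(rows)]

def latent_loss(predicted, target):
    """Squared distance between embeddings, not between raw inputs."""
    return sum((p - t) ** 2 for p, t in zip(predicted, target))

def ema_update(target_w, context_w, decay=0.99):
    """Move the target encoder's weights slowly toward the context encoder's."""
    return [[decay * t + (1 - decay) * c for t, c in zip(t_row, c_row)]
            for t_row, c_row in zip(target_w, context_w)]

rng = random.Random(0)
INPUT_DIM, LATENT_DIM = 8, 3  # hypothetical sizes

context_encoder = random_matrix(LATENT_DIM, INPUT_DIM, rng)
target_encoder = [row[:] for row in context_encoder]  # starts as a copy
predictor = random_matrix(LATENT_DIM, LATENT_DIM, rng)

observation = [rng.uniform(0, 1) for _ in range(INPUT_DIM)]
visible = observation[:4] + [0.0] * 4  # context: second half masked out
hidden = [0.0] * 4 + observation[4:]   # target: only the masked part

z_context = linear(context_encoder, visible)
z_target = linear(target_encoder, hidden)   # treated as a constant during training
z_predicted = linear(predictor, z_context)  # guess the target's embedding

loss = latent_loss(z_predicted, z_target)
print(len(z_predicted), loss >= 0)  # 3 True

# After each (omitted) training step, the target encoder would be refreshed:
target_encoder = ema_update(target_encoder, context_encoder)
```

Notice that the loss compares 3 latent numbers, not the 8 raw input values. That is the point of the design: the model is never asked to reconstruct every low-level detail, only the abstract summary of what was hidden.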

Why World Models Matter (Especially Beyond Silicon Valley)

World Models unlock capabilities that matter deeply in real-world contexts:

Robotics: Machines that understand space, balance, and cause and effect

Autonomous systems: Vehicles and drones that plan safely

Climate & agriculture: Predicting outcomes before acting

Infrastructure & cities: Anticipating failures instead of reacting

Healthcare: Modeling patient trajectories over time

For regions like Africa, this shift is especially important.

World Models can help AI:

Understand local environments

Handle uncertainty and scarce data

Adapt to complex, real-world conditions

Move beyond “text-only” intelligence

Read more in the I-JEPA paper, “Self-Supervised Learning from Images with a Joint-Embedding Predictive Architecture” → https://arxiv.org/abs/2301.08243

Subscribe if you enjoyed this article! See you in the next one!